Modified Richardson iteration is an iterative method for solving a system of linear equations. Richardson iteration was proposed by Lewis Fry Richardson in his work dated 1910. It is similar to the Jacobi and Gauss–Seidel methods.

We seek the solution to a set of linear equations, expressed in matrix terms as Ax = b.

The Richardson iteration is

x(k + 1) = x(k) + ω(b − Ax(k)),

where ω is a scalar parameter that has to be chosen such that the sequence x(k) converges.

It is easy to see that the method is correct, because if it converges, then x(k + 1) ≈ x(k), so the update ω(b − Ax(k)) must be close to zero, and x(k) has to approximate a solution of Ax = b.
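The update rule can be sketched in a few lines of Python. This is only a minimal illustration, not a reference implementation: the function name `richardson`, the stopping test on the largest residual entry, the defaults `tol` and `max_iter`, and the 2×2 example system are all assumptions introduced here. The matrix is stored as a plain list of rows so that no external library is needed.

```python
def richardson(A, b, omega, x0=None, tol=1e-10, max_iter=10_000):
    """Iterate x <- x + omega * (b - A x) until the residual is small.

    A is a dense square matrix given as a list of rows; b is a list.
    Returns the final iterate and the number of updates performed.
    """
    n = len(b)
    x = list(x0) if x0 is not None else [0.0] * n
    for k in range(max_iter):
        # Residual r = b - A x for the current iterate.
        r = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        # Stop once every residual entry is below the tolerance.
        if max(abs(ri) for ri in r) < tol:
            return x, k
        # Richardson update with the scalar parameter omega.
        x = [x[i] + omega * r[i] for i in range(n)]
    return x, max_iter


# Hypothetical example: a small symmetric system with positive eigenvalues.
A = [[4.0, 1.0], [1.0, 3.0]]
b = [1.0, 2.0]
x, iterations = richardson(A, b, omega=0.25)
```

For this example the exact solution is x = (1/11, 7/11), and ω = 0.25 lies inside the range for which the iteration converges, so the loop stops well before `max_iter`.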

Convergence

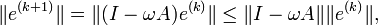

Subtracting the exact solution x, and introducing the notation e(k) = x(k) − x for the error, we get the equality for the errors

e(k + 1) = e(k) − ωAe(k) = (I − ωA)e(k).

Thus,

‖e(k + 1)‖ = ‖(I − ωA)e(k)‖ ≤ ‖I − ωA‖ ‖e(k)‖,

for any vector norm and the corresponding induced matrix norm. Thus, if ‖I − ωA‖ < 1, the method converges.

Suppose that A is diagonalizable and that (λj, vj) are the eigenvalue–eigenvector pairs of A. The error converges to 0 if | 1 − ωλj | < 1 for all eigenvalues λj. If, e.g., all eigenvalues are positive, this can be guaranteed if ω is chosen such that 0 < ω < 2 / λmax(A). The optimal choice, minimizing the largest of the values | 1 − ωλj | over all eigenvalues, is ω = 2 / (λmin(A) + λmax(A)), which gives the simplest Chebyshev iteration.
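The effect of ω on the convergence rate can be checked numerically. The sketch below assumes a hypothetical spectrum of three positive eigenvalues and evaluates the worst-case factor max | 1 − ωλj | for a given ω; the helper name `contraction_factor` and the sample values are illustrative only.

```python
def contraction_factor(eigenvalues, omega):
    """Largest |1 - omega * lambda_j|: the worst-case per-step error factor."""
    return max(abs(1.0 - omega * lam) for lam in eigenvalues)


# Hypothetical spectrum with all eigenvalues positive.
eigenvalues = [1.0, 2.0, 5.0]
lam_min, lam_max = min(eigenvalues), max(eigenvalues)

# Choice of omega that balances the two extreme eigenvalues.
omega_opt = 2.0 / (lam_min + lam_max)
rho_opt = contraction_factor(eigenvalues, omega_opt)

# Factors for a few other admissible values 0 < omega < 2 / lam_max.
others = {w: contraction_factor(eigenvalues, w)
          for w in (0.05, 0.10, 0.15, 0.20, 0.25, 0.30, 0.35)}
```

With ω = 2 / (λmin + λmax) the factors at λmin and λmax have equal magnitude, (λmax − λmin) / (λmax + λmin), which is 2/3 for the values assumed here. Every other sampled ω gives a factor that is larger but still below 1.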

If there are both positive and negative eigenvalues, then for any real ω ≠ 0 there is at least one eigenvalue λj with | 1 − ωλj | > 1, so the method will diverge whenever the initial error e(0) has nonzero components along the corresponding eigenvectors.
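The indefinite case can be illustrated with a small experiment. The sketch below assumes a diagonal matrix with the hypothetical eigenvalues −1 and 2, so that each error component evolves independently as e_j(k + 1) = (1 − ωλj) e_j(k); the function name `error_after` and the sampled values of ω are illustrative only.

```python
def error_after(eigenvalues, e0, omega, steps):
    """Apply e <- (I - omega * A) e for a diagonal A with the given eigenvalues."""
    e = list(e0)
    for _ in range(steps):
        e = [(1.0 - omega * lam) * ej for lam, ej in zip(eigenvalues, e)]
    return e


# Hypothetical indefinite spectrum: one negative and one positive eigenvalue.
eigenvalues = [-1.0, 2.0]
e0 = [1.0, 1.0]  # initial error with a component along each eigenvector

# Largest error component after 50 steps, for positive and negative omega.
growth = {}
for omega in (-0.5, -0.1, 0.1, 0.5):
    e = error_after(eigenvalues, e0, omega, steps=50)
    growth[omega] = max(abs(ej) for ej in e)
```

For positive ω the component belonging to the negative eigenvalue grows, and for negative ω the component belonging to the positive eigenvalue grows, so the largest error component ends up above its initial value of 1 in every case sampled here.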

References

- Richardson, L.F. (1910). “The approximate arithmetical solution by finite differences of physical problems involving differential equations, with an application to the stresses in a masonry dam”. Philos. Trans. Roy. Soc. London Ser. A 210: 307–357.

- Lebedev, Vyacheslav Ivanovich (2002). “Chebyshev iteration method”. In Encyclopaedia of Mathematics, ed. Michiel Hazewinkel. Springer / Kluwer. ISBN 1402006098. Retrieved 2010-05-25.

- Extremal polynomials with application to Richardson iteration for indefinite linear systems (Technical summary report / Mathematics Research Center, University of Wisconsin–Madison).
